Multi-sensor data fusion for optical inline inspection

Published on December 7, 2020 by TIS Marketing.

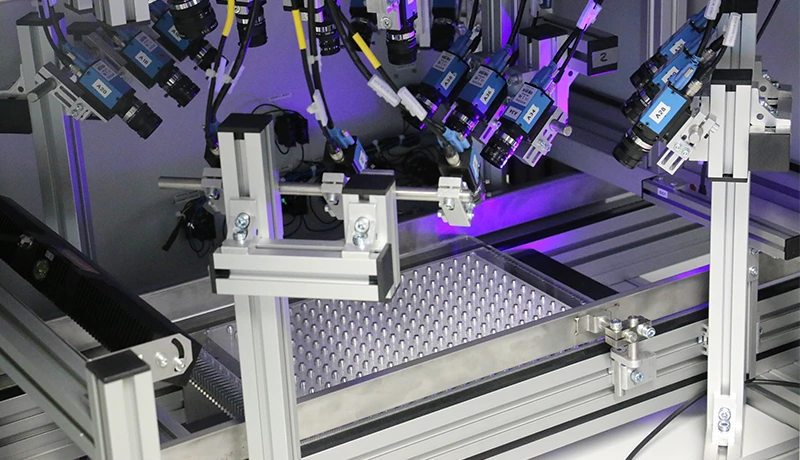

Optical inspection is the cornerstone of most quality-control workflows. When performed by humans, however, the process is expensive, error-prone, and inefficient: false-reject or escape rates of 10% to 20%, and the production bottlenecks that result, are not uncommon. Under the name IQZeProd (Inline Quality control for Zero-error Products), researchers at Fraunhofer IWU are developing new inline monitoring solutions to detect defects in a variety of materials such as wood, plastics, metals, and painted surfaces as early as possible in the production process. The system fuses data from a variety of sensors to detect structural and surface defects as components pass along the production line. The goal is to make industrial manufacturing processes more robust and sustainable by increasing process reliability and improving defect detection. At the heart of the system are the researchers' own Xeidana® software framework and a matrix of twenty industrial cameras. The researchers had very specific camera criteria: global-shutter monochrome sensor, low-jitter real-time triggering, reliable data transmission at very high data rates, and easy integration into their software framework. They chose GigE Vision standard industrial cameras from The Imaging Source.

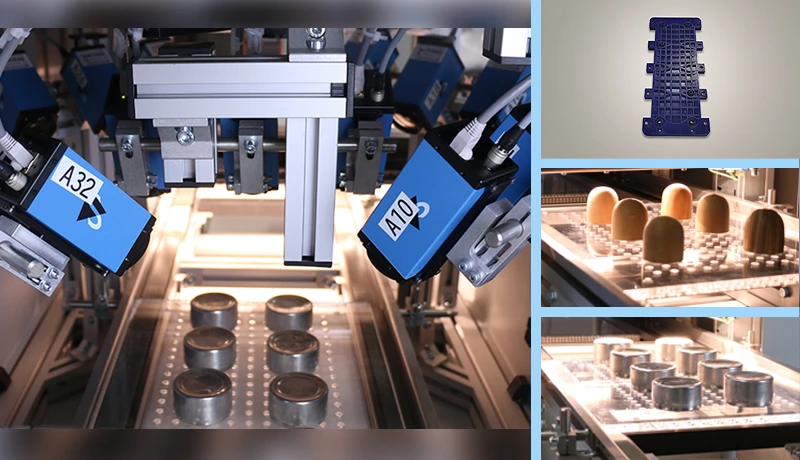

Although Xeidana's framework approach offers the flexibility to process data from optical, thermal, multispectral, polarization, or non-optical sensors (e.g. eddy current), many inspection tasks are handled with the data delivered by standard optical sensors. Project manager Alexander Pierer commented: "We often use data fusion by scanning the critical component areas redundantly. This redundancy can consist, for one thing, of capturing one and the same region from different perspectives, which emulates the so-called manual 'mirroring' of human visual inspection." To acquire the visual data needed for these tasks, the researchers set up a camera matrix of 20 TIS cameras: 19 monochrome and one color camera.
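The redundant scanning Pierer describes can be illustrated with a minimal sketch. It assumes that each camera view has already produced a defect score between 0 and 1 for each component region; the function name `fuse_views`, the score scale, the threshold, and the "worst view wins" rule are all hypothetical choices for illustration and are not taken from Xeidana.

```python
def fuse_views(scores_by_view, threshold=0.5):
    """Fuse per-region defect scores from several redundant camera views.

    scores_by_view: list of dicts mapping region name -> score in [0, 1].
    A region is flagged if ANY view sees it as defective (maximum rule),
    loosely mimicking an inspector who tilts a part until a flaw shows up.
    This rule is an assumption, not the project's documented method.
    """
    fused = {}
    for view in scores_by_view:
        for region, score in view.items():
            # Keep the highest (worst) score seen for this region so far.
            fused[region] = max(fused.get(region, 0.0), score)
    return {region: score >= threshold for region, score in fused.items()}


# Two views of the same two regions: the scratch in region "A" is only
# visible from the second perspective.
views = [
    {"A": 0.1, "B": 0.2},
    {"A": 0.8, "B": 0.3},
]
result = fuse_views(views)
```

A maximum rule trades a higher false-reject rate for fewer escapes; averaging or majority voting across views would shift that balance the other way.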

Monochrome sensors: optimal for defect detection

Because of their fundamental physical properties, monochrome sensors deliver higher resolution, better sensitivity, and less noise than color sensors. Pierer points out: "Monochrome sensors are usually sufficient for detecting defects that appear as brightness differences on the surface. Color information is very important to us humans, but in technical applications it very often provides no additional information. We use the color camera for hue analysis via HSI transformation, to detect color deviations that indicate a faulty, possibly too thin, paint coat."
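As a rough illustration of hue analysis via HSI transformation, the sketch below converts a single RGB pixel to hue, saturation, and intensity using the common textbook HSI formulas and compares its hue with a reference color. The function names, the 5 degree tolerance, and the per-pixel comparison are assumptions made for this example; the article does not describe how the project implements the check.

```python
import math


def rgb_to_hsi(r, g, b):
    """Convert RGB values in [0, 1] to (hue in degrees, saturation, intensity)."""
    intensity = (r + g + b) / 3.0
    if intensity == 0.0:
        return 0.0, 0.0, 0.0  # black: hue and saturation are undefined
    saturation = 1.0 - min(r, g, b) / intensity
    num = 0.5 * ((r - g) + (r - b))
    # Guard against tiny negative values caused by floating-point rounding.
    den = math.sqrt(max(0.0, (r - g) ** 2 + (r - b) * (g - b)))
    if den == 0.0:
        return 0.0, saturation, intensity  # grey: hue undefined, report 0
    theta = math.degrees(math.acos(max(-1.0, min(1.0, num / den))))
    hue = 360.0 - theta if b > g else theta
    return hue, saturation, intensity


def hue_deviation(h1, h2):
    """Smallest angular distance between two hues, in degrees."""
    d = abs(h1 - h2) % 360.0
    return min(d, 360.0 - d)


def paint_hue_ok(pixel_rgb, reference_rgb, tolerance_deg=5.0):
    """Hypothetical check: is the pixel hue within tolerance of the reference?"""
    hue_pixel = rgb_to_hsi(*pixel_rgb)[0]
    hue_ref = rgb_to_hsi(*reference_rgb)[0]
    return hue_deviation(hue_pixel, hue_ref) <= tolerance_deg
```

Working on hue rather than raw RGB makes the comparison less sensitive to brightness changes, which is one plausible reason to separate color tone from intensity before looking for paint deviations.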

The task and the short exposure times meant that the engineers had very precise camera criteria. Pierer continues: "The main selection criteria were global shutter and real-time-capable triggering with very low jitter, since we capture the parts in motion with very short exposure times in the 10 µs range. The camera exposure and the Lumimax illumination (iiM AG), which is also triggered via a hardware input, must run in absolute synchronization. We tested several of your competitors here, and many had problems with this. It was also important to us that the ROI could be restricted to relevant areas directly in the camera firmware, in order to optimize the network load for image transmission. Furthermore, we depend on reliable data transmission at very high data rates. Because the parts are inspected as they pass through, dropped images or fragmented image transmissions must not occur."
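Two of these criteria lend themselves to a quick back-of-the-envelope calculation. The sketch below estimates how far a part travels during one exposure and how much an in-camera ROI reduces the transmitted data. The 10 µs exposure comes from the quote above and the 1 m/s line speed is the design limit quoted elsewhere in this article; the sensor and ROI dimensions are purely hypothetical.

```python
def motion_blur_um(speed_m_per_s, exposure_us):
    """Distance a part travels during one exposure, in micrometres.

    metres/second multiplied by microseconds gives micrometres directly.
    """
    return speed_m_per_s * exposure_us


def roi_data_fraction(full_width, full_height, roi_width, roi_height):
    """Fraction of full-frame pixel data that remains after cropping to the ROI."""
    return (roi_width * roi_height) / (full_width * full_height)


# Line speed of 1 m/s and a 10 µs exposure (values quoted in the article).
blur = motion_blur_um(1.0, 10.0)

# Hypothetical 1920 x 1200 sensor cropped to a 1920 x 300 band of interest.
fraction = roi_data_fraction(1920, 1200, 1920, 300)
```

Under these assumptions the part moves about 10 µm during the exposure, and the cropped ROI transmits a quarter of the full-frame data. The same multiplication applies to trigger jitter: any timing uncertainty between camera and illumination translates into position uncertainty at the same rate of 1 µm per microsecond at 1 m/s.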

Motorized zoom cameras enable fast FOV adjustment

Over the course of the project, the team built several systems, both for industrial use and for demonstration and test purposes. In a typical industrial environment, where the components to be inspected stay the same, fixed-focus industrial cameras met the team's requirements. For the demo and test system, however, the researchers used a range of different components, including metal parts, wood blanks, and 3D-printed plastic parts, which required cameras with an adjustable field of view (FOV). The monochrome zoom cameras from The Imaging Source, with integrated motorized zoom, provided this functionality.

Massively parallel processing keeps pace with data transfer and enables deep learning

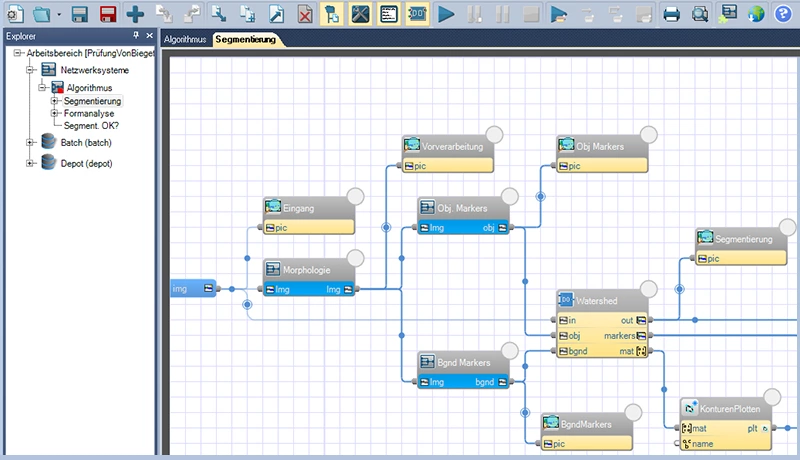

With more than 20 sensors of various kinds feeding data to the system, a data stream on the order of 400 MB/s is to be expected. Pierer explains: "The system is designed for throughput speeds of up to 1 m/s. [...] Every three to four seconds, the 20-camera matrix generates 400 images. Added to this are the data from the hyperspectral line-scan camera and the roughness measurement system, all of which must be processed and evaluated within the cycle time of 10 seconds. To meet this requirement, so-called massively parallel data processing is needed, involving 28 computing cores (CPU) and the graphics processor (GPU). This parallelization enables the inspection system to keep pace with the production cycle and to deliver an inline-capable system with 100% inspection." Xeidana's modular framework approach is optimized for modern multi-core systems. It lets application engineers quickly build a massively parallel, application-specific quality-control program from a system of plug-ins, which can be extended with new functionality through a variety of image processing libraries.
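The figures Pierer quotes allow a rough estimate of the processing budget per image. The sketch below uses the 400 images, the 10-second cycle time, and the 28 CPU cores from the quote; it assumes ideal scaling across cores and ignores the GPU as well as the hyperspectral and roughness data, so the result is an optimistic upper bound rather than a measured value. The function names are made up for this example.

```python
def images_per_second(images, burst_duration_s):
    """Average acquisition rate while the camera matrix is capturing."""
    return images / burst_duration_s


def per_image_budget_s(images_per_cycle, cycle_time_s, cores):
    """Time each core may spend on one image if work is spread evenly.

    Assumes perfect parallel scaling, which real systems rarely reach.
    """
    return cycle_time_s * cores / images_per_cycle


# 400 images captured in roughly 3 to 4 seconds (article figures).
rate_slow = images_per_second(400, 4.0)
rate_fast = images_per_second(400, 3.0)

# 400 images, 10 s cycle time, 28 CPU cores (article figures).
budget = per_image_budget_s(400, 10.0, 28)
```

Under these assumptions the matrix delivers roughly 100 to 133 images per second during a burst, and each core has at most about 0.7 seconds per image. On a single core the budget would shrink to 25 ms per image, which illustrates why the workload is distributed across many cores.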

The system's data fusion capabilities can be used in different ways, depending on which information delivers the best results. In addition to the more standard inspection tasks of industrial image processing, the research team is currently working on integrating other non-destructive testing techniques such as 3D vision, as well as additional sensors from the non-visible spectrum (e.g. X-ray, radar, UV, terahertz), to detect other kinds of surface and internal defects.

Because Xeidana supports massively parallel processing, deep learning techniques can also be applied to defect detection on components whose inspection criteria are not clearly quantified or defined. Pierer clarifies: "These methods are particularly important for organic components with an irregular texture, such as wood and leather, as well as for textiles." Because machine learning techniques are sometimes difficult to apply in certain contexts (e.g. limited traceability of the classification decision and the inability to adjust algorithms manually during commissioning), Pierer adds: "In our projects we therefore mostly rely on classical image processing algorithms and statistical methods of signal processing. Only when we reach their limits do we turn to machine learning."

Acknowledgment: The Imaging Source Europe GmbH is an active member of the industrial working group of the IQZeProd project and is in close technical exchange with the research partners. The IGF project IQZeProd (232 EBG) of the research association Deutsche Forschungsvereinigung für Mess-, Regelungs- und Systemtechnik e.V. - DFMRS, Linzer Str. 13, 28359 Bremen, was funded via the AiF within the program for the promotion of industrial collective research (IGF) by the Federal Ministry for Economic Affairs and Energy (Bundesministerium für Wirtschaft und Energie) on the basis of a decision of the German Bundestag. The final report of IGF project 232 EBG is available to the interested public in the Federal Republic of Germany. It can be obtained from Deutsche Forschungsvereinigung für Mess-, Regelungs- und Systemtechnik e.V. - DFMRS, Linzer Str. 13, 28359 Bremen, and from Fraunhofer IWU, Reichenhainer Straße 88, 09126 Chemnitz. Funded by the Federal Ministry for Economic Affairs and Energy on the basis of a decision of the German Bundestag.
