Dynamic Range in Machine Vision Sensors

In industrial imaging, the primary challenge is rarely capturing enough light; it is managing extreme contrast. If you are inspecting a highly reflective machined aluminum cylinder fitted with a matte black rubber O-ring, your camera must record blinding highlights and deep shadows simultaneously. Dynamic range describes the camera's ability to do exactly this without blowing out the bright details to pure white or losing the dark details in noise. Understanding the physics of dynamic range allows system integrators to specify the right sensor architecture for high-contrast environments like welding inspection, semiconductor manufacturing, and outdoor traffic monitoring.

The physics of dynamic range: Buckets and noise

To understand dynamic range, it helps to visualize a single pixel as a bucket collecting rain (photons).

The absolute maximum amount of water the bucket can hold before overflowing is the pixel's Full Well Capacity (FWC). If the pixel collects more photons than its FWC, it saturates, and the resulting image data is clipped to pure white, permanently destroying any geometric detail in that bright area.

However, the bucket is never perfectly clean. Even in absolute darkness, the sensor's readout electronics generate a baseline level of random electrical noise known as Read Noise. This noise acts like a layer of murky sludge at the bottom of the bucket. Any incoming light signal weaker than this noise floor cannot be measured reliably.

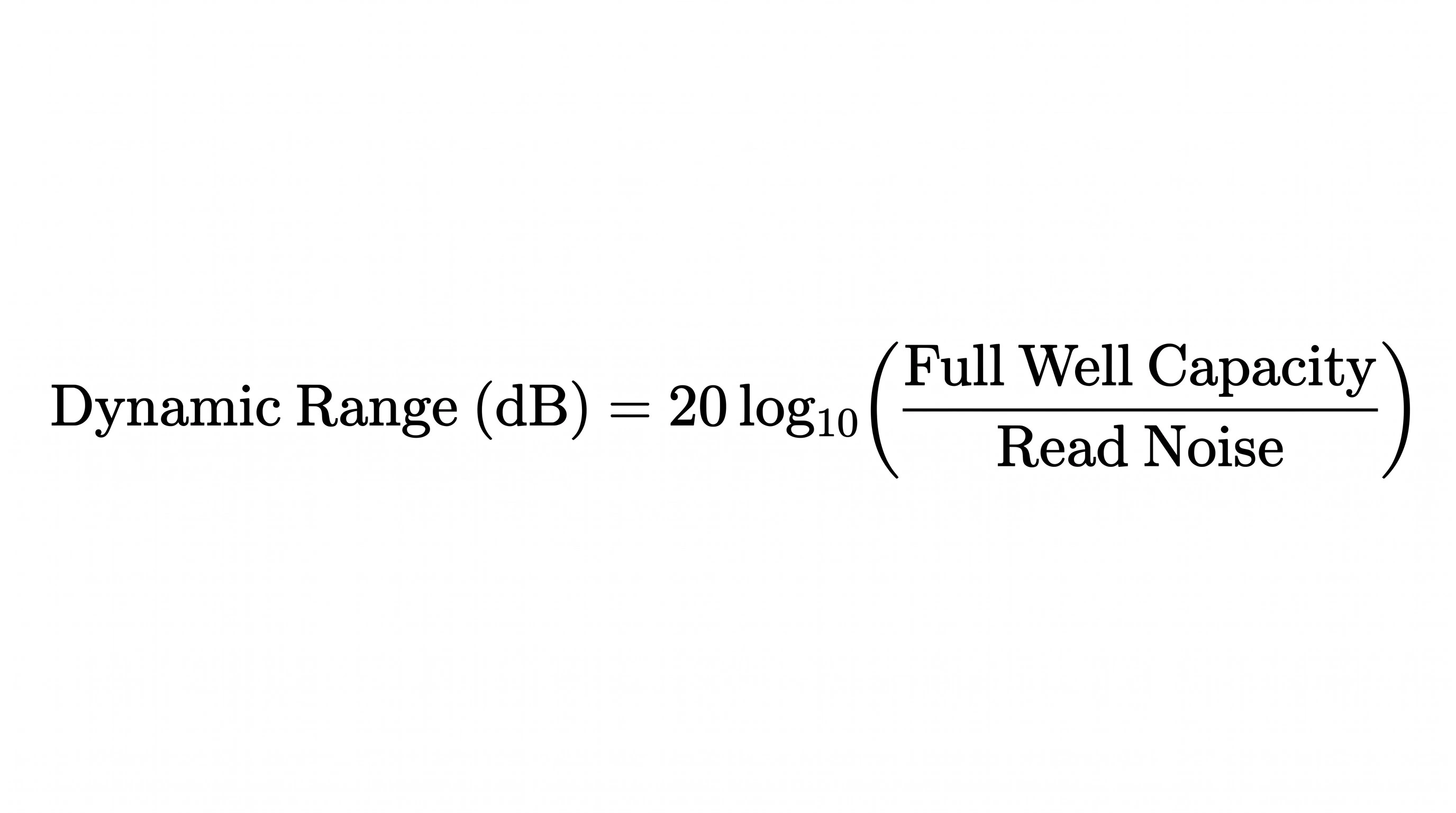

A sensor's dynamic range is the usable space between that noise floor and the saturation point. It is mathematically calculated as a ratio and expressed in decibels (dB):

$$
\mathrm{DR}_{\mathrm{dB}} = 20 \log_{10}\!\left(\frac{\text{Full Well Capacity}\ (e^-)}{\text{Read Noise}\ (e^-)}\right)
$$

To increase dynamic range, sensor manufacturers must either engineer a larger bucket (higher full well capacity) or reduce the sludge at the bottom (lower read noise).
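The ratio described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the common 20·log10 convention for sensor dynamic range with Full Well Capacity and Read Noise both expressed in electrons. The function names and the sensor figures are hypothetical and do not describe any particular camera.

```python
import math


def dynamic_range_db(full_well_e: float, read_noise_e: float) -> float:
    """Dynamic range in dB from full well capacity and read noise (both in electrons)."""
    if full_well_e <= 0 or read_noise_e <= 0:
        raise ValueError("full well capacity and read noise must be positive")
    return 20.0 * math.log10(full_well_e / read_noise_e)


def contrast_ratio(dr_db: float) -> float:
    """Convert a dynamic range in dB back to a plain brightest-to-darkest ratio."""
    return 10.0 ** (dr_db / 20.0)


# Hypothetical sensor: 10,000 e- full well, 2.5 e- read noise.
baseline = dynamic_range_db(10_000, 2.5)
# Two ways to improve it: a bigger bucket or less sludge.
bigger_well = dynamic_range_db(20_000, 2.5)
lower_noise = dynamic_range_db(10_000, 1.25)

print(f"baseline:    {baseline:.2f} dB")     # about 72.04 dB
print(f"bigger well: {bigger_well:.2f} dB")  # about 78.06 dB
print(f"lower noise: {lower_noise:.2f} dB")  # about 78.06 dB
print(f"60 dB is roughly {contrast_ratio(60):.0f}:1")
```

Under this convention, doubling the full well capacity or halving the read noise each adds about 6 dB, which is why both routes are equally valid on paper.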

Dynamic range vs. bit depth: The ruler analogy

Engineers often confuse a camera's dynamic range with its bit depth (e.g., 8-bit vs. 12-bit output), but they measure two completely different things.

Imagine dynamic range as the total physical length of a ruler. It defines the absolute distance from the darkest shadow to the brightest highlight. Bit depth represents the number of tick marks printed on that ruler.

An 8-bit output divides that ruler into 256 distinct steps of gray, while a 12-bit output divides the exact same ruler into 4,096 steps. Upgrading from an 8-bit to a 12-bit camera format does not magically increase your sensor's physical dynamic range; it simply gives the machine vision software finer, smoother gradients between the absolute black and absolute white limits.
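To make the ruler analogy concrete, the sketch below computes how many electrons each gray level represents at different bit depths. It assumes a simple linear mapping of the full well onto the full output code range, which real cameras may not use, and the 10,000 e- full well is a hypothetical figure.

```python
def gray_levels(bits: int) -> int:
    """Number of distinct output codes (tick marks) for a given bit depth."""
    return 2 ** bits


def electrons_per_step(full_well_e: float, bits: int) -> float:
    """Size of one gray-level step in electrons, assuming a linear mapping."""
    return full_well_e / gray_levels(bits)


FULL_WELL_E = 10_000  # hypothetical sensor; the ruler length does not change

for bits in (8, 12):
    levels = gray_levels(bits)
    step = electrons_per_step(FULL_WELL_E, bits)
    print(f"{bits}-bit: {levels} levels, {step:.2f} e- per step")
# 8-bit:  256 levels, 39.06 e- per step
# 12-bit: 4096 levels, 2.44 e- per step
```

The full well, and therefore the length of the ruler, is identical in both cases. Only the spacing of the tick marks changes.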

How industrial cameras achieve High Dynamic Range (HDR)

When standard sensor physics cannot cover the contrast required by an application, camera manufacturers employ specific hardware and software techniques to extend the dynamic range.

| HDR Technique | How it works | Best used for |
| --- | --- | --- |
| Large Pixel Pitch | Physically larger pixels naturally have a massive Full Well Capacity, allowing them to absorb extreme highlights without saturating, while maintaining low noise. | High-speed, high-contrast inspection where motion artifacts must be avoided entirely. |
| Multi-Exposure HDR | The camera rapidly captures two frames, one short exposure for the highlights and one long exposure for the shadows, and merges them in hardware. | Stationary parts or outdoor ITS (Intelligent Traffic Systems) where the target is relatively predictable. |
| Dual Conversion Gain (DCG) | Each pixel can be read out at two conversion gains, and the sensor combines the high-gain (low noise) and low-gain (high saturation) readings from a single exposure. | Highly dynamic environments requiring single-frame HDR without the risk of spatial distortion. |
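The multi-exposure idea can be illustrated with a deliberately simplified merge. This is a sketch, not how any specific camera implements it in hardware: it assumes a linear sensor response, a known exposure ratio, and a hard saturation threshold, and the function names and pixel values are hypothetical.

```python
import math


def merge_two_exposures(short_frame, long_frame, exposure_ratio, saturation_level):
    """Merge two exposures pixel by pixel.

    Use the long exposure wherever it is not saturated (better shadow signal).
    Where it is saturated, fall back to the short exposure scaled up by the
    exposure ratio so both frames share one brightness scale.
    """
    if len(short_frame) != len(long_frame):
        raise ValueError("frames must have the same number of pixels")
    merged = []
    for short_px, long_px in zip(short_frame, long_frame):
        if long_px >= saturation_level:
            merged.append(float(short_px) * exposure_ratio)
        else:
            merged.append(float(long_px))
    return merged


def added_range_db(exposure_ratio: float) -> float:
    """Idealized extra dynamic range gained from the exposure ratio."""
    return 20.0 * math.log10(exposure_ratio)


# Hypothetical 12-bit frames (saturation at 4095), long exposure 16x the short one.
long_frame = [40, 800, 4095, 4095]
short_frame = [2, 50, 900, 3000]

hdr = merge_two_exposures(short_frame, long_frame, 16.0, 4095)
print(hdr)  # [40.0, 800.0, 14400.0, 48000.0]
print(f"idealized gain: {added_range_db(16.0):.1f} dB")  # about 24.1 dB
```

The two brightest pixels, which were clipped in the long exposure, are recovered from the short one. Real implementations typically blend the frames more smoothly near the saturation threshold to avoid visible seams.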

Managing dynamic range with optical solutions

If a camera upgrade is not possible, system integrators often solve dynamic range problems optically, before the light ever hits the sensor. Polarized light sources paired with polarizing lens filters can drastically cut the specular glare from metallic or plastic surfaces. This lowers the peak brightness of the scene, compressing its contrast into a range that a standard machine vision sensor can measure.

Frequently asked questions

Does multi-exposure HDR reduce the camera's frame rate?

Yes. Because the sensor must physically expose, read out, and mathematically combine multiple frames to produce a single HDR image, your maximum achievable frame rate will drop significantly. It also introduces the risk of "ghosting" artifacts if the inspected part moves rapidly between the first and second exposure.

Can I fix blown-out highlights simply by lowering the exposure time?

Lowering the exposure time will prevent the bright highlights from clipping to pure white, but it shifts your entire exposure window downward. This means your shadows will sink into the noise floor and become pitch black. It does not increase the dynamic range; it simply forces you to choose which end of the brightness range you are willing to sacrifice.
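The trade-off described above can be checked with a toy model. The sketch assumes a linear sensor where collected signal equals photon rate times exposure time, and treats anything at or below the read noise as lost. The sensor figures, scene rates, and function name are all hypothetical.

```python
def classify_pixel(rate_e_per_ms, exposure_ms, full_well_e, read_noise_e):
    """Classify a pixel as clipped, lost in noise, or usable for a given exposure."""
    signal_e = rate_e_per_ms * exposure_ms
    if signal_e >= full_well_e:
        return "clipped"
    if signal_e <= read_noise_e:
        return "lost in noise"
    return "usable"


FULL_WELL_E = 10_000   # hypothetical sensor
READ_NOISE_E = 2.5
HIGHLIGHT_RATE = 5_000  # electrons per ms from the bright metal
SHADOW_RATE = 1         # electrons per ms from the black O-ring

for exposure_ms in (4.0, 1.0):
    highlight = classify_pixel(HIGHLIGHT_RATE, exposure_ms, FULL_WELL_E, READ_NOISE_E)
    shadow = classify_pixel(SHADOW_RATE, exposure_ms, FULL_WELL_E, READ_NOISE_E)
    print(f"{exposure_ms} ms: highlight {highlight}, shadow {shadow}")
# 4.0 ms: highlight clipped, shadow usable
# 1.0 ms: highlight usable, shadow lost in noise
```

In this example the scene spans 5,000:1 while the sensor spans only 4,000:1, so no single exposure time keeps both ends usable.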

What is a typical dynamic range for an industrial camera?

Standard industrial CMOS sensors typically offer a dynamic range between 60 dB and 75 dB. This is more than sufficient for standard factory automation with controlled lighting. Highly specialized HDR sensors designed for welding or outdoor automotive applications can exceed 90 dB, and some reach 120 dB or more.