Quantum Efficiency (QE) in Machine Vision

Quantum efficiency (QE) measures how effectively an image sensor converts incoming light into an electrical signal. It is defined as the percentage of photons hitting the sensor's active surface that generate measurable electrons. If a sensor has a QE of 75% at a specific wavelength, then on average 75 out of every 100 photons at that wavelength are converted into electrons, while the remaining 25 are reflected, absorbed outside the light-sensitive region, or pass through the silicon without producing a collected electron. In industrial imaging, maximizing this metric is critical because it dictates how well a camera performs under very short exposure times or in light-constrained applications.
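The arithmetic behind this definition can be sketched in a few lines of Python. This is a minimal illustration, not a calibrated model: the function names are hypothetical, and the photon count relies on the standard relation that the number of photons equals the optical energy collected by the pixel divided by the energy of one photon, hc/λ.

```python
# Physical constants (SI units)
PLANCK_H = 6.62607015e-34   # J*s
LIGHT_C = 2.99792458e8      # m/s


def photons_per_pixel(irradiance_w_m2, pixel_pitch_um, exposure_s, wavelength_nm):
    """Mean number of photons reaching one square pixel during the exposure."""
    pixel_area_m2 = (pixel_pitch_um * 1e-6) ** 2
    energy_j = irradiance_w_m2 * pixel_area_m2 * exposure_s
    photon_energy_j = PLANCK_H * LIGHT_C / (wavelength_nm * 1e-9)
    return energy_j / photon_energy_j


def electrons_from_photons(photons, qe_percent):
    """Mean number of electrons generated for a photon count and a QE in percent."""
    if not 0.0 <= qe_percent <= 100.0:
        raise ValueError("qe_percent must be between 0 and 100")
    return photons * qe_percent / 100.0
```

For example, `electrons_from_photons(100, 75)` reproduces the 75-out-of-100 case described above. The irradiance, pixel pitch, and exposure passed to `photons_per_pixel` are inputs you would take from your own setup; none are implied by the text.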

The physics of light conversion

When light enters a lens and strikes the silicon of a CMOS sensor, it transfers its energy. If an incoming photon has sufficient energy, it interacts with the silicon to knock an electron loose, creating an electron-hole pair. The pixel's micro-circuitry then collects these electrons to form the voltage that becomes your digital image.
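The "sufficient energy" condition can be made concrete with a short Python sketch. It assumes a silicon bandgap of roughly 1.12 eV at room temperature and uses the convenient relation E [eV] ≈ 1239.84 / λ [nm]; the function names are hypothetical, and the check ignores everything except photon energy (absorption depth, for instance, is not modeled).

```python
# hc expressed in eV*nm, so that energy_eV = HC_EV_NM / wavelength_nm
HC_EV_NM = 1239.84
# Approximate bandgap of silicon at room temperature (assumed value), in eV
SILICON_BANDGAP_EV = 1.12


def photon_energy_ev(wavelength_nm):
    """Energy of a single photon in electronvolts."""
    return HC_EV_NM / wavelength_nm


def can_excite_silicon(wavelength_nm):
    """True if the photon carries at least the bandgap energy."""
    return photon_energy_ev(wavelength_nm) >= SILICON_BANDGAP_EV


def cutoff_wavelength_nm():
    """Longest wavelength whose photons still reach the bandgap energy."""
    return HC_EV_NM / SILICON_BANDGAP_EV
```

Under these assumptions the cutoff lands near 1100 nm: a 525 nm photon carries well over the bandgap energy, while a 1200 nm photon does not.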

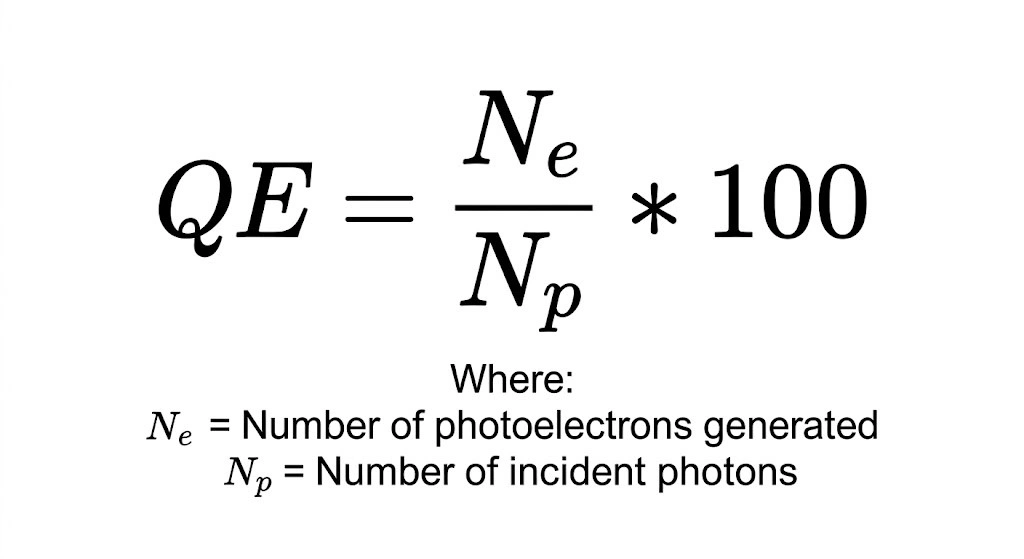

The underlying formula for this conversion is straightforward:

$$
\mathrm{QE}(\lambda) = \frac{N_{\text{electrons}}}{N_{\text{photons}}} \times 100\%
$$

However, achieving high efficiency requires sophisticated silicon engineering. The metal wiring and circuitry on the sensor surface can physically block photons from reaching the light-sensitive photodiode. To combat this, manufacturers use micro-lenses, tiny optical domes placed over every individual pixel, to focus incoming light directly into the active pixel area, dramatically increasing the overall QE. Back-illuminated (BSI) sensor designs, like Sony's STARVIS series, take this further by moving the wiring behind the photodiode, allowing an unobstructed path for the photons.

The wavelength dependency curve

Quantum efficiency is not a single, flat number. It is a curve that varies significantly depending on the wavelength of the light.

Most standard industrial CMOS sensors achieve their peak quantum efficiency in the green region of the spectrum (around 525 nm). As wavelength increases toward the near-infrared (NIR) band (above ~850 nm), silicon becomes less efficient at absorbing incoming photons. Many photons at these wavelengths penetrate too deeply or pass through the active region without generating charge carriers, which is why standard sensors lose sensitivity in the NIR range. When reviewing a camera datasheet, the headline "75% QE" almost always refers to the absolute peak of this curve. You must check the sensor's spectral response chart to determine its true efficiency for the specific color of light you are using.
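Reading a value off the spectral response chart amounts to interpolating between tabulated points. The sketch below shows one way to do that in Python. The curve data is purely illustrative (it is not taken from any datasheet and only mimics the general shape described above), and `qe_at` is a hypothetical helper name.

```python
from bisect import bisect_right

# Illustrative (wavelength_nm, QE_percent) pairs; NOT from a real datasheet.
ILLUSTRATIVE_QE_CURVE = [
    (400, 45.0), (450, 62.0), (525, 75.0), (600, 68.0),
    (700, 50.0), (850, 22.0), (950, 8.0), (1000, 3.0),
]


def qe_at(wavelength_nm, curve=ILLUSTRATIVE_QE_CURVE):
    """Linearly interpolate QE (percent) at a wavelength inside the curve's range."""
    wavelengths = [w for w, _ in curve]
    if not wavelengths[0] <= wavelength_nm <= wavelengths[-1]:
        raise ValueError("wavelength outside the tabulated range")
    i = bisect_right(wavelengths, wavelength_nm)
    if i == len(wavelengths):
        # Exactly at the last tabulated point
        return curve[-1][1]
    (w0, q0), (w1, q1) = curve[i - 1], curve[i]
    return q0 + (q1 - q0) * (wavelength_nm - w0) / (w1 - w0)
```

With this made-up curve, the headline figure applies only at 525 nm; at an 850 nm illumination wavelength the same sensor would deliver less than a third of that efficiency. Substitute points digitized from your own sensor's chart to get meaningful numbers.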

Quantum efficiency in machine vision applications

System integrators rely on high-QE cameras when they are forced to restrict light. Whether the constraint is the speed of a conveyor belt or the physical limits of an enclosure, a higher QE lets the sensor collect a usable signal from the limited light available.

| Application | Lighting constraint | Why high QE is required |
| --- | --- | --- |
| High-speed sorting | Sub-millisecond exposure times | To freeze motion, the camera shutter is open for a fraction of a millisecond. High QE captures enough data in that tiny window to perform precise edge detection. |
| Fluorescence microscopy | Weak, low-intensity emissions | Biological samples often emit very faint light. High QE ensures the sensor captures these weak signals before they are lost to read noise. |
| Agricultural inspection | NIR bandpass filtering | Inspecting fruit for subsurface bruising requires NIR light. Standard sensors have low QE in the NIR band, often requiring specially optimized thick-silicon sensors. |
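The trade-off between QE and exposure time in the high-speed case can be sketched as follows. This is a simplified illustration: it assumes a constant photon arrival rate at the pixel, ignores noise entirely, and uses hypothetical function names and example numbers.

```python
def exposure_for_target_signal(target_electrons, photon_rate_per_s, qe_percent):
    """Exposure time (s) needed to collect target_electrons on average."""
    if photon_rate_per_s <= 0 or qe_percent <= 0:
        raise ValueError("photon rate and QE must be positive")
    electron_rate_per_s = photon_rate_per_s * qe_percent / 100.0
    return target_electrons / electron_rate_per_s


def motion_blur_px(object_speed_mm_s, exposure_s, mm_per_pixel):
    """Distance the object travels during the exposure, expressed in pixels."""
    return object_speed_mm_s * exposure_s / mm_per_pixel


# Example with assumed values: 1000 e- target, 5e6 photons/s per pixel,
# a belt moving at 2000 mm/s, and 0.1 mm of object per pixel.
for qe in (40, 80):
    t = exposure_for_target_signal(1000, 5e6, qe)
    print(f"QE {qe}%: exposure {t * 1e6:.0f} us, blur {motion_blur_px(2000, t, 0.1):.1f} px")
```

In this model, doubling the QE halves the exposure needed for the same signal, which in turn halves the motion blur.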

Why do monochrome sensors have higher quantum efficiency than color sensors?

When specifying a camera for an inspection task, engineers often default to monochrome unless color data is strictly required. The reason is directly tied to quantum efficiency.

To create a color image, manufacturers apply a Bayer filter mosaic over the sensor. Each pixel is covered by a microscopic red, green, or blue filter. A pixel covered by a red filter physically absorbs and blocks green and blue photons, preventing them from reaching the silicon.

Because a monochrome sensor lacks this filter array, every pixel receives the full spectrum of available light. The absence of physical obstruction means a monochrome camera will always possess a significantly higher overall quantum efficiency and superior absolute sensitivity compared to the exact same sensor model built with a color array.

Key specifications to evaluate

Under the EMVA 1288 standard, QE is evaluated alongside several other metrics to define a camera's true sensitivity:

| Specification | Relationship to QE |
| --- | --- |
| Absolute Sensitivity Threshold (AST) | The minimum number of photons required to get a signal equivalent to the camera's noise. High QE lowers the AST. |
| Signal-to-Noise Ratio (SNR) | Higher QE directly increases the signal, improving the SNR for a cleaner, more reliable image. |
| Readout Noise | If a sensor has high QE but also high readout noise, the advantage of capturing more photons is negated in the shadows. |
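How these three quantities interact can be sketched with a deliberately simplified noise model. The Python below assumes only photon shot noise and a fixed read noise in electrons; dark current and quantization noise are ignored, so this is not the full EMVA 1288 formulation. The function names are hypothetical. In this model the threshold follows from setting SNR = 1 and solving for the photon count.

```python
import math


def snr(photons, qe_percent, read_noise_e):
    """SNR with shot noise and read noise only (simplified model)."""
    signal_e = photons * qe_percent / 100.0
    noise_e = math.sqrt(signal_e + read_noise_e ** 2)
    return signal_e / noise_e if noise_e > 0 else 0.0


def absolute_sensitivity_threshold(qe_percent, read_noise_e):
    """Photons per pixel at which SNR equals 1 in this simplified model."""
    eta = qe_percent / 100.0
    # Solve (eta*N)^2 = eta*N + read_noise^2 for N
    return (math.sqrt(read_noise_e ** 2 + 0.25) + 0.5) / eta
```

Comparing `absolute_sensitivity_threshold(80, 2.0)` with `absolute_sensitivity_threshold(40, 2.0)` shows the threshold halving as QE doubles, while raising the read noise from 2 to 10 electrons at a fixed QE pushes the threshold up several times over.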

Frequently asked questions

Can quantum efficiency exceed 100%?

For standard industrial CMOS and CCD sensors operating under linear conditions, no. At visible and NIR wavelengths, a single photon generates at most one electron. While multi-photon absorption can occur at extreme intensities (such as with high-power lasers), and specialized scientific sensors use internal gain to multiply electrons, these cases fall outside standard machine vision hardware.

Does a high QE guarantee good low-light images?

Not automatically. While high QE is crucial, the camera must also have low dark current and low readout noise. If a sensor converts light efficiently but generates too much electrical noise during the readout process, the resulting image will still be poor in low-light conditions.

How do I match my lighting to the sensor's QE?

Look at the camera's spectral response curve on the datasheet. If the sensor's QE peaks at 525 nm, pairing it with green LED illumination will yield the most efficient light collection, allowing you to use shorter exposure times or stop down the lens aperture (a higher f-number) for greater depth of field.